In a recent post from Flagright, the company outlined the biases and blind spots of risk scoring algorithms.

Finance has always revolved around numbers: stock prices, interest rates, credit scores, and profitability metrics.

However, the past few decades have seen a major shift in how these figures are derived, scrutinised, and applied. This transformation is largely attributable to the emergence of risk scoring algorithms.

Historically, financial risk was assessed manually. Loan officers, for instance, relied largely on paper records, personal interviews, and their own intuition to evaluate an individual’s creditworthiness. But as transaction volumes grew and financial instruments became more intricate, it became evident that a more systematic, efficient, and data-centric method was needed. This need gave rise to risk scoring algorithms. The first generation of these algorithms was mostly rule-based, with simple criteria determining actions such as loan approvals. An applicant with a credit score above a set level, a steady job, and no history of default would typically be considered low risk.
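The kind of first-generation rule described above can be sketched in a few lines of Python. This is only an illustration: the `Applicant` fields, the `is_low_risk` function, and the 650 credit-score cut-off are hypothetical choices made for the example, not values taken from the Flagright post or from any real lender.

```python
from dataclasses import dataclass

# Hypothetical cut-off chosen purely for illustration.
MIN_CREDIT_SCORE = 650


@dataclass
class Applicant:
    credit_score: int
    has_steady_job: bool
    has_default_history: bool


def is_low_risk(applicant: Applicant, min_credit_score: int = MIN_CREDIT_SCORE) -> bool:
    """Apply three fixed rules; the applicant must pass all of them."""
    return (
        applicant.credit_score >= min_credit_score
        and applicant.has_steady_job
        and not applicant.has_default_history
    )
```

Because every rule is fixed in advance, a sketch like this is easy to audit but cannot adapt: an applicant who falls just short on one criterion is rejected regardless of anything else the data might show.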

The digital transformation of the late 20th and early 21st centuries made sophisticated risk assessment both more necessary and more feasible. With the proliferation of e-commerce and the normalisation of digital banking, the sheer volume of transactions made manual evaluation impractical. The digital sphere also introduced new financial risks, primarily related to fraud. Solutions emerged in the form of machine learning (ML) and artificial intelligence (AI). These technologies, with their ability to process very large data sets rapidly, drove the next generation of risk scoring algorithms. Rather than following static rules, these models learn directly from the data and continuously adapt and refine their risk evaluations.

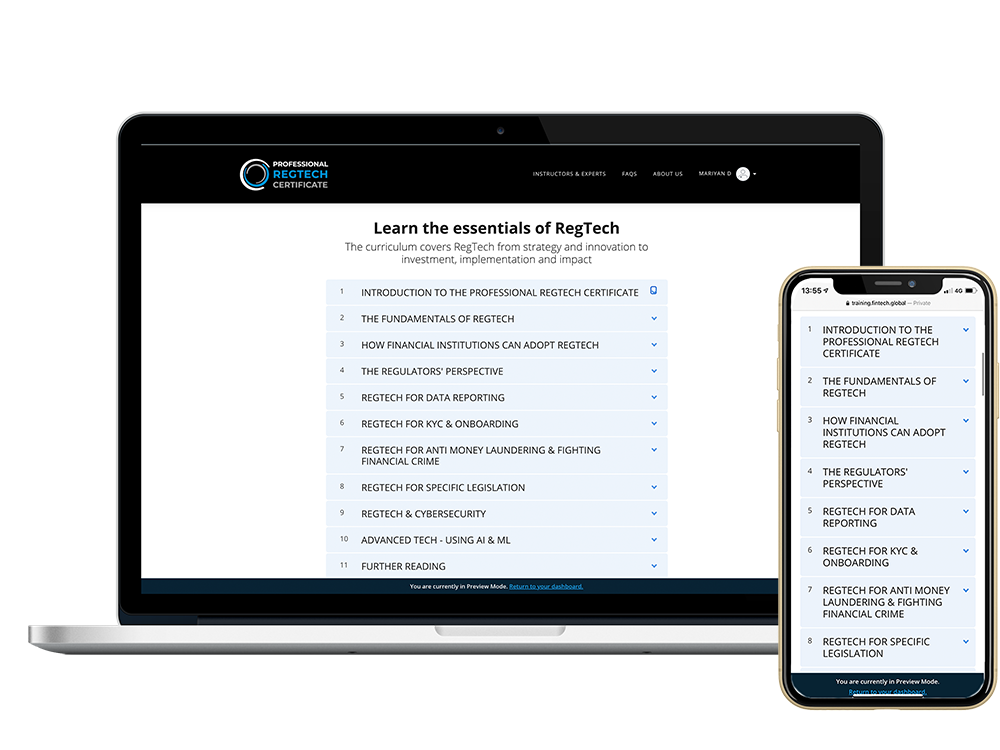

Today, risk scoring algorithms have permeated nearly every aspect of the financial sector. They play crucial roles in monitoring transactions in real time to detect anomalies that might suggest fraud or money laundering, assessing customer risk to anticipate defaults or late payments, verifying the identity and authenticity of businesses and individuals, and screening against sanctions lists to avoid unintended associations with blacklisted entities or persons.

The finance industry has warmly embraced these tools, lured by their prospects of cost reductions, heightened efficiency, and superior risk prediction accuracy. Customers have also reaped the benefits, experiencing faster loan approvals and more custom-tailored financial products congruent with their risk profiles. Nevertheless, risk scoring algorithms, like any tool, are not devoid of flaws. Their accelerated integration into financial mechanisms has surfaced challenges, notably around biases and blind spots, which we’ll explore subsequently.

These algorithms aren’t merely mathematical computations; they’re complex systems designed to dissect, interpret, and forecast based on extensive data sets. Their strength lies in their capacity to analyse massive data sets rapidly and make predictions that would be onerous or impossible for humans to produce as quickly. Yet their inner workings also expose potential vulnerabilities, from biased data to flawed model assumptions.

Despite their advanced capabilities, risk scoring algorithms face inherent challenges, chiefly data bias, historical bias, over-reliance on quantitative figures, feedback loops, and issues related to transparency, generalisation, and real-world testing. Recognising and addressing these challenges is pivotal to improving the algorithms’ efficacy and fairness.

Read the full post here.

Copyright © 2023 RegTech Analyst
